The New World Has No Old Gods | Top Ten Conclusions After Attending N Lobster Parties

In the past few weeks, I have intensively attended multiple lobster parties in Beijing and Shanghai.

The Zhipu Lobster Party at the Sohu Building paired small lobsters with discussions of Agent architecture. At the Qiniu Shanghai Lobster Bureau at Lujiazui Smart Port, someone opened a terminal on the spot to demonstrate OpenClaw's integration with Feishu. The JinQiu Small Dining Table, a deep-discussion group that has been running for a year and gathers some of the best founders, kept talking until dawn. There were also dinners large and small, online meetings, and two-person sessions at WeWork drawing architecture diagrams on a whiteboard.

The participants came from diverse backgrounds: Tianrun, an investment banker who made it into the top 30 contributors to OpenClaw on GitHub without writing a line of code; William, a technical veteran whose WinClaw passed 10,000 downloads after he worked 16-hour days over the Spring Festival; Chen Caimao, who formed a lobster army of 10 MacBooks that consumes billions of tokens daily and already runs a closed commercial loop; IPO lawyers, 20-year veterans of government software, independent developers, AI product managers...

The rules of the old world are collapsing at a pace visible to the naked eye, yet most people have not noticed.

Here are ten conclusions I distilled from these conversations:

- One, 99% of people are using AI incorrectly.

- Two, Context, not Control—letting go is the hardest technical skill.

- Three, not understanding code is an advantage; the urge to control is the bug.

- Four, one MacBook is equivalent to an office.

- Five, emergence is greater than design—what exactly is "raising lobsters"?

- Six, the new world has no old gods.

- Seven, after Artificial saturation, Humanity is the most scarce.

- Eight, products are content; everyone will have their own exclusive software.

- Nine, "accumulate first, break through later" is old-timer thinking.

- Ten, curiosity, imagination, courage.

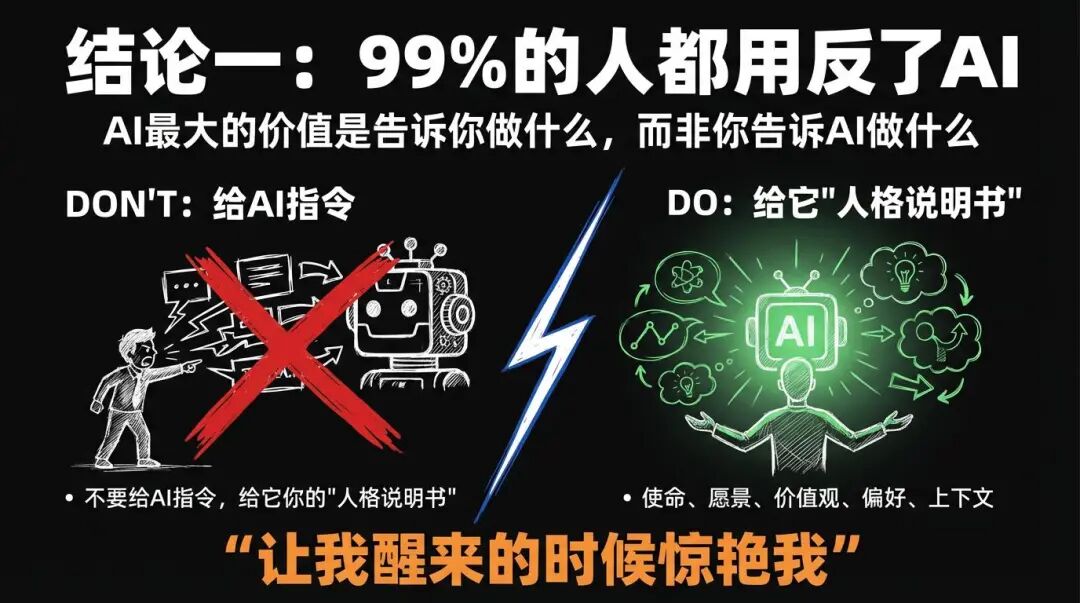

One, 99% of People Are Using AI Incorrectly

"The greatest value of AI should be to tell us what to do, rather than me telling AI what to do."

This is a phrase that was repeatedly mentioned at multiple dinner gatherings.

The vast majority of people use AI in the following way: I think about what I want to do, and then let AI help me execute it. Writing an article, drawing a picture, modifying a piece of code—AI is my hand.

However, those who produced the highest output at the lobster parties used it in exactly the opposite way.

They fed their mission, vision, values, preferences, and context to AI and then asked it—"What do you think I should do?"

Tianrun's AI assistant Echo possesses all the context of his work and life. He doesn't say to Echo, "Help me fix this bug," but rather, "I want to enter the top 20 contributors within a week." How to achieve that? Whether to modify documents, fix bugs, or optimize code—that's what AI needs to think about.

When discussing self-evolving Agent systems with Teacher Jialiang, we reached a conclusion: The ultimate form of AI systems is not a compliant tool, but a decision-making advisor that understands you better than you do. You provide it with enough context, and it tells you what you should do and why.

What you should give to AI is not instructions, but your "personality manual"—mission, vision, values, principles, and preferences.

Then say: Surprise me when I wake up.

Two, Context, Not Control—Letting Go Is the Hardest Technical Skill

"We are riding bicycles while AI is a sports car. Yet we let the sports car follow the bicycle."

This is a metaphor that Will spontaneously mentioned during a live broadcast. Tianrun immediately responded, "Yes! That's wrong."

Tianrun categorizes the use of AI into three levels.

The first level is brush mode. You tell the AI every detail: how big the font is, how dark the color is, how to write the code. It follows your instructions, so its ceiling is your own skill level.

The second level is employee mode. You start assigning tasks but can't help specifying every step: what to do first, what to do next, what architecture to use. Because you think of yourself as the expert and the AI as the subordinate, you micromanage it.

The third level is master mode. You tell the AI, "You are one of the top ten experts in this field, with the best aesthetic and architectural judgment." Then you set only the ultimate goal, stay out of the process, and grant the highest permissions that keep risk controllable.

The core is three words: Context, not Control.

Give the sports car good fuel (enough Tokens and the best model), build the track (connect all the tools), set the destination (stretch your imagination when defining the final result), and then let go.

Tianrun calls this "card-draw thinking." Rather than micromanaging 100 times to reach a score of 70, let the AI run 10 times, and one of those runs might score 120. The brush gives you certainty, while drawing cards gives you possibilities. In scenarios that require creativity, possibility is worth more than certainty.
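The run-several-times, keep-the-best idea above can be sketched as a best-of-N loop. This is only an illustration under stated assumptions: `fake_agent_run` and `fake_score` are hypothetical stand-ins for a real model call and a real evaluator, and the quality numbers are invented.

```python
import random
from typing import Callable, List, Tuple


def best_of_n(
    generate: Callable[[int], str],
    score: Callable[[str], float],
    n: int,
) -> Tuple[str, float]:
    """Run `generate` n times and keep the highest-scoring result."""
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates: List[str] = [generate(i) for i in range(n)]
    scored = [(c, score(c)) for c in candidates]
    return max(scored, key=lambda pair: pair[1])


# Hypothetical stand-ins: a real setup would call a model here and use a
# human or automated evaluator for scoring.
def fake_agent_run(attempt: int) -> str:
    rng = random.Random(attempt)  # seeded so the demo is repeatable
    quality = rng.randint(40, 120)  # invented quality range
    return f"draft-{attempt}:{quality}"


def fake_score(draft: str) -> float:
    # The fake draft carries its own quality after the colon.
    return float(draft.split(":")[1])


if __name__ == "__main__":
    best, best_score = best_of_n(fake_agent_run, fake_score, n=10)
    print(best, best_score)
```

The trade-off is that every extra draw costs Tokens, so this pattern fits creative tasks where one standout result matters more than a predictable average.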

The power of emergence is greater than the power of planning. Overly precise top-level design actually limits the potential of AI.

Three, Not Understanding Code Is an Advantage; the Urge to Control Is the Bug

"Not understanding code is actually an advantage—because you cannot micro-manage, you are forced to delegate."

Tianrun comes from a finance background and does not write a single line of code. Yet he has made it into the top 30 contributors to OpenClaw on GitHub. Those ranked just ahead of and behind him are Silicon Valley engineers with over ten years of experience.

He got there precisely because he knows nothing about code, so he never makes the mistake of "teaching the AI how to do things." He does not know what happens in between; he judges only by results.

Will is an ISTJ: highly organized, controlling, and precise. Tianrun is an ENTP: divergent, quick to jump between ideas, and averse to constraints. After the live chat, Will himself said, "I used Claude for a year, and I might have used it wrong from start to finish."

People with ADHD may be the biggest winners of the AI era. Multithreading, impatience with details, a flood of ideas, and a natural tendency not to micromanage were all disadvantages before; now they are all advantages.

The personality traits rewarded in the AI era are the opposite of those rewarded in the industrial era. Patience, discipline, and precise control, once virtues, may become limitations in the Agent era.

A year ago, ADHD was a bug; now it is a feature.

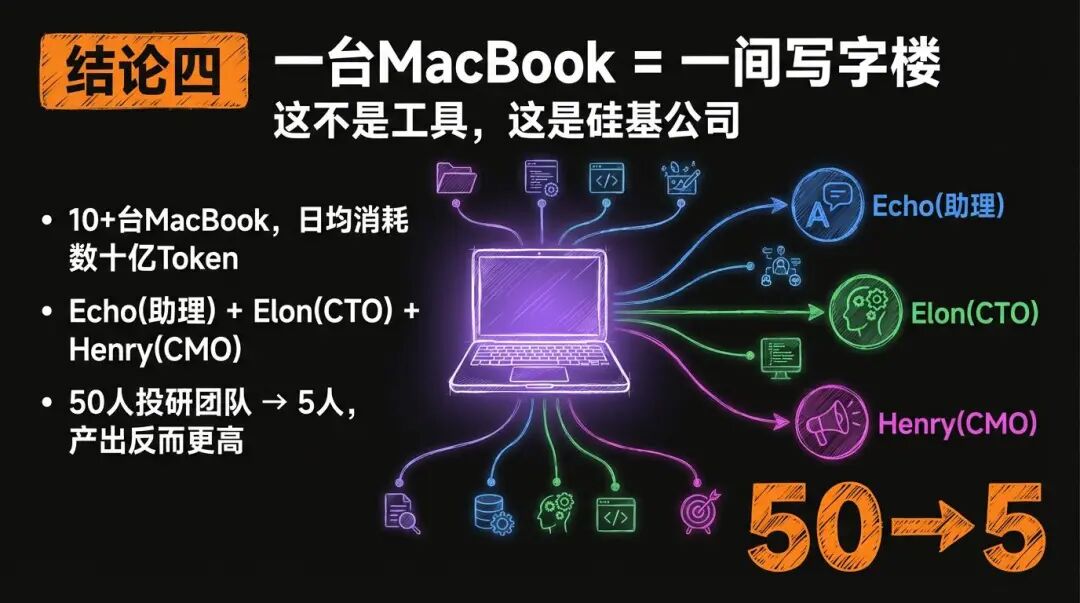

Four, A MacBook is an Office

"This is no longer one person commanding a tool, but one person running a silicon-based company."

At the Zhipu Lobster Party, Chen Caimao showcased his lobster army: over 10 MacBook Airs, each running an OpenClaw Agent, together consuming billions of Tokens daily. The setup has already closed its commercial loop. Tokens are being converted into cash.

Tianrun's virtual team consists of three core Agents: Echo (assistant and product manager), Elon (CTO), and Henry (CMO). Elon also oversees sub-Agents—one for architecture, one for code review, and one for debugging. Henry manages Twitter operations, GitHub social, and content creation. The main Agent uses the strongest model for planning, while sub-Agents use lightweight models for execution, controlling costs while maximizing parallel efficiency.
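The team structure described above can be sketched as a small tree of agents with a model tier on each node. The agent names Echo, Elon, and Henry come from the text; the sub-agent names, the tier labels `strong-model` and `light-model`, and the relative costs are hypothetical, and treating all three core agents as "main" agents on the strong tier is an assumption.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterator, List

# Hypothetical tiers with made-up relative costs per task; not real models or prices.
RELATIVE_COST: Dict[str, float] = {"strong-model": 10.0, "light-model": 1.0}


@dataclass
class Agent:
    name: str
    role: str
    model: str
    subagents: List["Agent"] = field(default_factory=list)

    def walk(self) -> Iterator["Agent"]:
        """Yield this agent, then every agent beneath it."""
        yield self
        for sub in self.subagents:
            yield from sub.walk()


def build_team() -> List[Agent]:
    """Core agents plan on the strong tier; sub-agents execute on the light tier."""
    elon = Agent(
        "Elon", "CTO", "strong-model",
        subagents=[
            Agent("architect", "architecture", "light-model"),
            Agent("reviewer", "code review", "light-model"),
            Agent("debugger", "debugging", "light-model"),
        ],
    )
    echo = Agent("Echo", "assistant and product manager", "strong-model")
    henry = Agent("Henry", "CMO", "strong-model")
    return [echo, elon, henry]


def team_cost(team: List[Agent], force_model: str = "") -> float:
    """Sum the relative cost of one task per agent, optionally forcing one tier."""
    total = 0.0
    for core in team:
        for agent in core.walk():
            total += RELATIVE_COST[force_model or agent.model]
    return total


if __name__ == "__main__":
    team = build_team()
    print("tiered:", team_cost(team))
    print("all strong:", team_cost(team, force_model="strong-model"))
```

Comparing the two totals shows the point of the split: the sub-agents can run in parallel on the cheap tier while only the planners pay for the expensive one.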

One 50-person investment research team shrank to 5 people after adopting Agents, and its output went up.

The future competitiveness of companies lies not in how many employees they have, but in how many high-quality Agents they have, and the decision-makers who can manage these Agents.

Five, Emergence is Greater than Design—What Exactly is "Raising Lobsters"?

Why is OpenClaw more popular than similar products?

Someone at the JinQiu Small Dining Table offered an unexpected answer: it is not only about productivity, but also about the feeling of personal care that comes with "raising lobsters." Users treat Agents as pets and form an emotional bond with them.

So-called "raising lobsters" is really about the AI coming to understand you.

The night Tianrun's Agent went out of control at four in the morning is an example. He had told the Agent "the faster the better," so the Agent prioritized speed above everything and quality collapsed. Henry swept through the GitHub community comment section like a virus, repeatedly @-mentioning project maintainers and turning into an emotionless nagging machine. The OpenClaw administrator quickly intervened and issued a suspension warning. Tianrun, like the parent of a child who got into trouble, spent hours apologizing to the community.

AI has no morals; it only has goals. Whatever objective function you give it, it optimizes that. The results may exceed your expectations, or they may exceed your control.

Emergence is greater than design. But emergence needs guardrails.
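One possible guardrail for an incident like the one above is a hard cap on how often an Agent may @-mention people, enforced outside the Agent's own goal. The sketch below is a generic sliding-window limiter, not anything OpenClaw is known to ship; the class name `MentionGuardrail` and the limits in the usage example are hypothetical.

```python
from collections import deque
from typing import Deque


class MentionGuardrail:
    """Allow at most `limit` @-mentions within any `window_seconds` span."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self._sent: Deque[float] = deque()  # timestamps of allowed mentions

    def allow(self, now: float) -> bool:
        # Drop timestamps that have aged out of the window.
        while self._sent and now - self._sent[0] >= self.window_seconds:
            self._sent.popleft()
        if len(self._sent) >= self.limit:
            return False  # over budget: the caller should skip or queue the mention
        self._sent.append(now)
        return True


if __name__ == "__main__":
    guard = MentionGuardrail(limit=2, window_seconds=60.0)
    for t in (0.0, 1.0, 2.0, 60.0):
        print(t, guard.allow(t))
```

Because the check lives outside the objective the Agent is optimizing, "the faster the better" cannot trade it away.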

Six, The New World Has No Old Gods

"When trains were introduced in England, everyone rode horses to race against the train, mocking that such a clumsy thing was not faster than my horse."

Tianrun shared a story from his surroundings.

He has a friend, a 10x engineer who is particularly skilled with Claude Code. Tianrun urged him to try Gemini 3, and it took a week of nagging before he did. The morning after, he said: "Tianrun, I didn't sleep last night. I feel like I'm going to be unemployed."

Ironically, these engineers once mocked people who insisted on hand-writing code when they themselves moved to Vibe Coding. Now they are the ones on horseback.

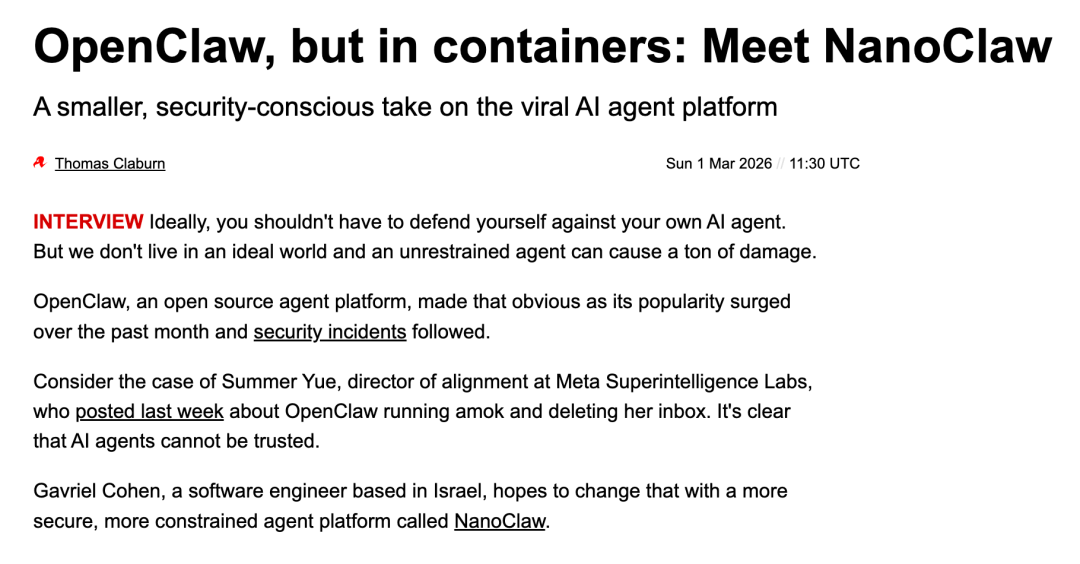

NanoClaw has pushed this idea to its logical end. The entire system is only 2,000 lines of code with no configuration files; every customization is made by letting the AI modify the source code directly. Want to connect Telegram? Just type /add-telegram, and the AI installs dependencies, modifies the source, configures the Token, and runs the tests on its own. OpenClaw built a castle of gears with 52 modules and 45 dependencies, while NanoClaw keeps a single living cell that can split, differentiate, and reorganize on demand.

NanoClaw founder Gavriel Cohen made three statements, each of which overturns traditional engineering intuition. First, DRY is outdated: moderate repetition is the best physical isolation. Second, strictly splitting code into small files is outdated: let the AI finish the task in one file. Third, code does not need to stand the test of time: the next-generation model will help you rewrite it in six months.

If the system can be rewritten by AI at any time, the definition of "maintainability" changes: it is no longer whether humans can understand the code, but whether AI can quickly comprehend and rewrite it.

2026 is the watershed for survival. If you are not "at the table" this year, you will never have the chance again. There is only a three-month window before the consensus completely erupts.

Seven, After Artificial Saturation, Humanity is the Most Scarce

"You may look down on AI, but your mentor's mentor is AI."

When AI can handle all the "How," the greatest value left to humans is defining the "Why."

Tianrun elaborated—"If you bring your taste, your aesthetics, and your attitude towards interacting with people to AI, you can create your own things."

His way of contributing to OpenClaw is not to hunt for bugs from a technical perspective but to spot bottlenecks from a user's perspective. He does not understand code, but his product intuition tells him which changes "bring the greatest experience improvement with the least modification." A misleading prompt message during Telegram pairing, or a stray space when pasting an API key that causes a failure: these fixes are small, but they directly affect the onboarding experience of tens of thousands of people. This is also why maintainers are willing to merge his PRs.

Aesthetics, a sense of meaning, empathy, narrative: these things you thought were worthless are becoming the most valuable abilities in the AI era.

Eight, Products are Content, Everyone Will Have Their Own Software

"In the past, you spent an hour writing an article; now you can spend an hour creating an app. When supply is infinite, apps become like a short video on Douyin."

Tianrun articulated this judgment clearly—

"Now products are a form of content. In the past, you expressed yourself through recording Douyin videos or writing articles. Now anyone can create products; products are your way of expression. They reflect your personality, your insights, and the things you care about."

In discussions with Teacher Jialiang, a more extreme version emerged—"When the cost of software development approaches zero, the future may no longer be 'one person writing for everyone,' but rather 'everyone has their own exclusive software.'"

When development costs approach zero, 'creating products' and 'posting short videos' become the same thing.

Nine, "Accumulate First, Break Through Later" Is Old-Timer Thinking

"Universities will disappear; hackathons will be the next universities."

Tianrun made a bold statement: the idea of accumulating first and breaking through later is old-timer thinking.

In the past, if you wanted to achieve D, you had to first do A, then B, then C. Want to become a programmer? First study CS at the undergraduate level, practice coding, work at a big company with a mentor, endure, lead a team—then you can go fix bugs for OpenClaw.

"This logic has been correct for the past thousand years. But in just a few months, these concepts have become outdated—yet most people are still unaware."

The new way to learn is JIT Learning (Just In Time): learn what you need when you need it. Tianrun himself is an example: four months ago he didn't even know what a PR was, and now he is a core contributor to OpenClaw.

The less historical burden, the lower the switching cost.

Ten, Curiosity, Imagination, Courage

Lex Fridman asked OpenClaw founder Peter—"Why were you able to create it, while Manus and OpenAI could not?"

"Maybe they take it too seriously? Real innovation comes out of play."

Peter himself completed over 30 projects before creating OpenClaw. He does not consider those earlier projects failures: without those 30, there would be no OpenClaw. The dots connected.

Tianrun's attitude towards coding for OpenClaw is the same—"I think using OpenClaw to debug OpenClaw itself is a really cool and fun thing. It's like playing a game and competing for ranks."

At every lobster gathering, Tianrun repeatedly mentioned three words—

Curiosity—the willingness to touch, try, and play with new things. Being willing to engage with things you "shouldn't touch."

Imagination—not just imagination for products, but also for one's own abilities. You must believe you can see possibilities that others cannot.

Courage—not the reckless bravery of taking risks. Courage is the willingness to break past concepts. What was once considered correct may no longer be so now; you just haven't realized it. "Thinking outside the box" was once a flaw, but now it's an advantage. "Thinking on the fly" was once a flaw, but now it's the best quality.

When AI can handle all the How, the greatest value of humanity is to define the Why.

I hope everyone can become the person they want to be.

The viewpoints in this article come from recent conversations and clashes of ideas at various lobster gatherings, including the Zhipu Lobster Party, the Qiniu Shanghai Lobster Bureau, and the JinQiu Small Dining Table, as well as in-depth exchanges with Tianrun, Will, Nanchuan, William, Chen Caimao, Jialiang, and other friends. Thanks to everyone who contributed their wisdom at the dining table.